Introduction

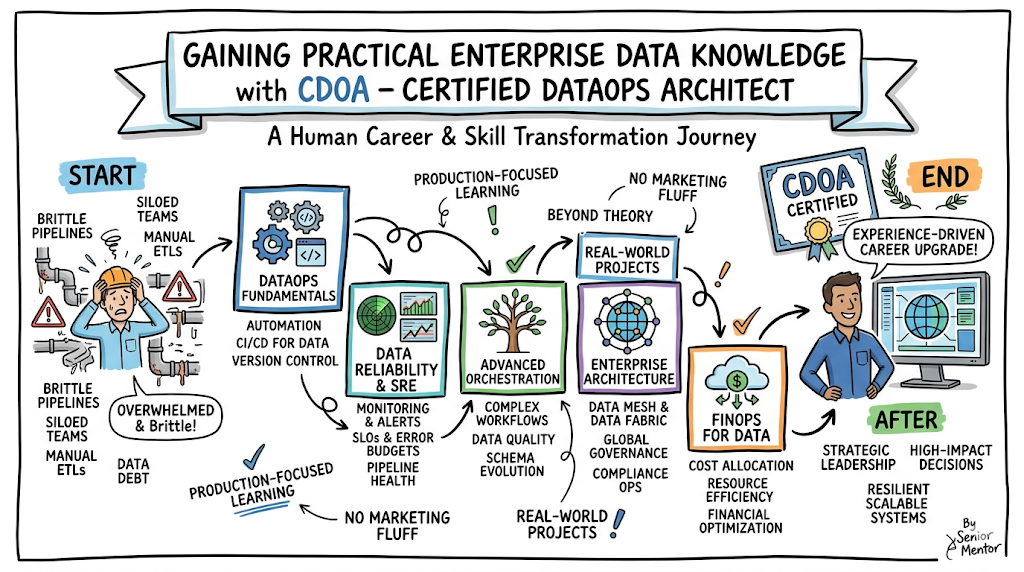

The CDOA – Certified DataOps Architect program is a comprehensive professional framework designed to bridge the gap between data engineering and operational excellence. This guide is written for software engineers, data professionals, and platform architects who need to navigate the complexities of modern data pipelines and automated governance. In the current era of high-velocity data, understanding how to apply DevOps principles to data management is no longer optional for those aiming for senior technical roles.

As organizations move toward cloud-native architectures, the role of a DataOps Architect has become central to scaling business intelligence and machine learning operations. This guide helps professionals make better career decisions by clarifying the skills required to succeed in a data-driven landscape. By choosing to train through Dataopsschool, engineers gain access to a curriculum that emphasizes production-grade stability and scalable data delivery. This document provides a clear roadmap for professionals to evaluate their current skills against industry standards.

What is the CDOA – Certified DataOps Architect?

The CDOA – Certified DataOps Architect is a professional designation that validates an individual’s ability to design, implement, and manage automated data environments. Unlike traditional data management courses that focus purely on SQL or database administration, this certification emphasizes the operational side of data. It focuses on creating a seamless lifecycle for data, from ingestion to consumption, ensuring that every stage is monitored, tested, and reproducible.

This program exists because modern enterprises struggle with data debt and brittle pipelines that break whenever a schema changes. The certification focuses on real-world, production-focused learning, moving beyond theoretical concepts to address the actual challenges of data drift and pipeline orchestration. It aligns perfectly with modern engineering workflows by treating data infrastructure as code, enabling teams to deploy data products with the same speed and reliability as software updates.

Who Should Pursue CDOA – Certified DataOps Architect?

This certification is designed for a broad spectrum of technical professionals, ranging from data engineers who want to automate their workflows to SREs tasked with maintaining data reliability. Cloud professionals and platform engineers will find the architectural principles particularly useful when building multi-cloud data platforms. It is also highly relevant for security professionals who must integrate automated compliance and data masking into the delivery pipeline.

For beginners in the data space, it provides a structured entry point into the operational side of the industry, while experienced engineers can use it to formalize their knowledge. Managers and technical leaders should pursue this track to better understand how to structure their teams and reduce the time-to-market for data-driven insights. In both the Indian and global markets, there is strong demand for professionals who can bridge the gap between data science and IT operations.

Why CDOA – Certified DataOps Architect is Valuable

The demand for DataOps expertise is growing as enterprises realize that data is their most valuable yet most difficult asset to manage. As organizations move away from manual ETL processes toward automated, self-healing pipelines, DataOps skills are likely to remain in demand for the long term. This certification helps professionals stay relevant even as specific tools change, because it teaches the underlying principles of lean manufacturing applied to data flows.

Earning this credential demonstrates a commitment to high-quality engineering standards and operational maturity. It offers a significant return on time and career investment by positioning the holder as a specialist capable of reducing operational overhead and improving data quality. Enterprises are increasingly adopting DataOps to support their AI and analytics initiatives, making this architect role one of the most stable and high-paying positions in the current infrastructure landscape.

CDOA – Certified DataOps Architect Certification Overview

The CDOA program is delivered via a structured learning portal and is hosted on the primary provider website. The program is structured into distinct levels of proficiency, moving from fundamental concepts to complex architectural design. It uses a practical assessment approach, requiring candidates to demonstrate their understanding of how various components of a data stack interact under production pressure.

Because the provider owns the certification process, the curriculum can be kept current with industry trends such as data mesh and data fabric architectures. The structure is designed to be accessible to working professionals, offering a clear path from associate-level knowledge to expert-level mastery. By focusing on practical application rather than just memorization, the program aims to ensure that certified individuals are ready to contribute to enterprise projects immediately.

CDOA – Certified DataOps Architect Certification Tracks & Levels

The certification tracks are divided into three primary tiers: Foundation, Professional, and Advanced. The Foundation level introduces the core concepts of the DataOps manifesto, version control for data, and basic pipeline automation. The Professional level dives deeper into specific specializations such as SRE for Data, FinOps for Cloud Data, and automated testing frameworks for large-scale datasets.

The Advanced level, or the Architect tier, focuses on high-level strategy and the integration of data operations across the entire enterprise. It covers topics like cross-functional team structures, global data governance, and the financial optimization of data platforms. These levels are designed to align with career progression, allowing an engineer to grow from a contributor to a technical leader who can design complex, resilient systems.

Complete CDOA – Certified DataOps Architect Certification Table

| Track | Level | Who it’s for | Prerequisites | Skills Covered | Recommended Order |
| --- | --- | --- | --- | --- | --- |
| DataOps Foundation | Associate | Beginners & Junior Engineers | Basic Data Knowledge | CI/CD, Version Control, Data Basics | 1st |
| DataOps Specialist | Professional | Data Engineers & SREs | Foundation Cert | Automated Testing, Orchestration | 2nd |
| DataOps Architect | Advanced | Senior Engineers & Leads | Professional Cert | Governance, Mesh, Strategy | 3rd |
| DataOps Security | Professional | Security & Cloud Engineers | Foundation Cert | Data Masking, Compliance Ops | Optional |
| DataOps FinOps | Professional | Managers & Cloud Architects | Foundation Cert | Cost Management, Resource Optimization | Optional |

Detailed Guide for Each CDOA – Certified DataOps Architect Certification

CDOA – DataOps Associate Foundation

What it is

This certification validates a professional’s understanding of the fundamental principles of DataOps and the agility required in modern data management. It introduces the core philosophy of bringing automation to the data lifecycle and explores the basic tools needed to build consistent, reproducible pipelines.

Who should take it

It is suitable for junior data engineers, software developers transitioning into data roles, and technical project managers who need to oversee data teams. No deep prior experience in automation is required, making it an ideal starting point for anyone looking to enter the operational side of data engineering.

Skills you’ll gain

- Understanding the DataOps Manifesto and its core values.

- Implementing basic version control for data schemas and scripts.

- Creating simple automated data pipelines using common orchestration tools.

- Monitoring data flows for basic uptime and performance metrics.

Real-world projects you should be able to do

- Set up a version-controlled repository to manage SQL scripts and data definitions.

- Build a basic CI/CD pipeline that triggers a data load on a code commit.

- Configure simple alerts for pipeline failures using standard monitoring tools.
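
To make the alerting project above concrete, here is a minimal sketch of a pipeline runner that stops at the first failing step and sends an alert. It is not taken from the course material: the names `run_pipeline`, `extract`, and `load` are hypothetical, and the alert channel is assumed to be any callable that accepts a message (a list's `append` stands in for a real notifier here).

```python
from typing import Callable, List, Tuple

# A step is a (name, function) pair; both are illustrative assumptions.
Step = Tuple[str, Callable[[], None]]


def run_pipeline(steps: List[Step], alert: Callable[[str], None]) -> bool:
    """Run steps in order; on the first failure, send one alert and stop."""
    for name, step in steps:
        try:
            step()
        except Exception as exc:
            alert(f"Pipeline step '{name}' failed: {exc}")
            return False
    return True


def extract() -> None:
    """Placeholder for a real extract step."""


def load() -> None:
    """Placeholder load step that simulates a failure."""
    raise RuntimeError("target table is locked")


# Collect alerts in a list instead of calling a real notification service.
alerts: List[str] = []
ok = run_pipeline([("extract", extract), ("load", load)], alerts.append)
print(ok, alerts)
```

In a real setup the `alert` callable would typically wrap whatever monitoring or chat tool the team already uses; keeping it as a parameter makes the runner easy to test.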

Preparation plan

- 7-14 Days: Focus on the DataOps Manifesto and basic terminology of data engineering and automation.

- 30 Days: Practice setting up local environments and experimenting with version control for data scripts.

- 60 Days: Deep dive into the official course materials and take mock exams to identify knowledge gaps.

Common mistakes

- Underestimating the importance of cultural change and focusing only on technical tools.

- Ignoring the role of version control in maintaining consistent data management.

- Failing to understand how DataOps differs from traditional DevOps workflows.

Best next certification after this

- Same-track option: DataOps Specialist Professional

- Cross-track option: SRE Associate

- Leadership option: Technical Team Lead Foundation

CDOA – DataOps Specialist Professional

What it is

This level validates a deeper technical expertise in managing production-grade data environments and complex automation strategies. It focuses on the implementation of automated testing, sophisticated orchestration patterns, and robust data quality frameworks that ensure enterprise reliability.

Who should take it

This is intended for working Data Engineers, SREs, and Platform Engineers who are responsible for the day-to-day operation of enterprise data platforms. Candidates should have a solid understanding of cloud infrastructure and at least one primary data processing language.

Skills you’ll gain

- Designing and implementing automated data quality tests.

- Orchestrating complex multi-stage pipelines with dependencies.

- Implementing Infrastructure as Code for data platforms.

- Managing data drift and schema evolution in production environments.
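
As a rough illustration of the last skill, schema drift can be detected by comparing the schema a pipeline expects with the one it actually observes. The sketch below assumes schemas are represented as plain column-to-type dictionaries; the function name `detect_schema_drift` and the sample columns are hypothetical.

```python
from typing import Dict, List


def detect_schema_drift(
    expected: Dict[str, str], observed: Dict[str, str]
) -> Dict[str, List[str]]:
    """Report columns that were added, removed, or changed type."""
    expected_cols = set(expected)
    observed_cols = set(observed)
    return {
        "added": sorted(observed_cols - expected_cols),
        "removed": sorted(expected_cols - observed_cols),
        "retyped": sorted(
            col
            for col in expected_cols & observed_cols
            if expected[col] != observed[col]
        ),
    }


# Hypothetical schemas: the contract the pipeline was built against,
# and what the source system is sending today.
expected_schema = {"order_id": "int", "amount": "float", "created_at": "timestamp"}
observed_schema = {"order_id": "int", "amount": "str", "currency": "str"}

drift = detect_schema_drift(expected_schema, observed_schema)
print(drift)
```

A pipeline could run a check like this before each load and decide, per category, whether to continue, quarantine the batch, or page someone.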

Real-world projects you should be able to do

- Deploy an automated testing suite that validates data integrity before loading into a warehouse.

- Create a self-healing pipeline that can restart or alert based on specific error types.

- Use automation tools to provision a complete data environment in the cloud.
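
The first project above, validating data before it reaches the warehouse, can be sketched as a simple quality gate. This is an assumption-laden example rather than the program's own method: the warehouse is a plain list, and the rules (required columns and a unique key) as well as the names `validate_rows` and `load_if_valid` are hypothetical.

```python
from typing import Any, Dict, List

Row = Dict[str, Any]


def validate_rows(rows: List[Row], required: List[str], unique_key: str) -> List[str]:
    """Return a list of human-readable data quality errors (empty if clean)."""
    errors: List[str] = []
    seen = set()
    for i, row in enumerate(rows):
        # Rule 1: required columns must be present and not None.
        for col in required:
            if row.get(col) is None:
                errors.append(f"row {i}: missing value for '{col}'")
        # Rule 2: the key column must be unique within the batch.
        key = row.get(unique_key)
        if key is not None:
            if key in seen:
                errors.append(f"row {i}: duplicate {unique_key}={key!r}")
            seen.add(key)
    return errors


def load_if_valid(rows: List[Row], warehouse: List[Row]) -> List[str]:
    """Load the batch only when every check passes; otherwise load nothing."""
    errors = validate_rows(rows, required=["order_id", "amount"], unique_key="order_id")
    if not errors:
        warehouse.extend(rows)
    return errors


warehouse: List[Row] = []  # stand-in for a real warehouse table
batch = [
    {"order_id": 1, "amount": 10.0},
    {"order_id": 1, "amount": 5.0},   # duplicate key
    {"order_id": 2, "amount": None},  # missing amount
]
problems = load_if_valid(batch, warehouse)
print(problems, len(warehouse))
```

Rejecting the whole batch keeps the warehouse consistent; a team might instead choose to load the clean rows and quarantine the rest, which is a design decision rather than a rule.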

Preparation plan

- 7-14 Days: Review advanced orchestration patterns and testing strategies for large datasets.

- 30 Days: Work through hands-on labs involving cloud data warehouses and continuous integration tools.

- 60 Days: Focus on troubleshooting real-world scenarios and optimizing pipeline performance for scale.

Common mistakes

- Focusing too much on one specific tool rather than the underlying workflow logic and principles.

- Neglecting the security and compliance aspects of moving data across different environments.

- Creating overly complex pipelines that are difficult for other team members to maintain or troubleshoot.

Best next certification after this

- Same-track option: Certified DataOps Architect

- Cross-track option: Certified DevSecOps Professional

- Leadership option: Engineering Manager Track

CDOA – Certified DataOps Architect

What it is

The Architect level is the highest tier, focusing on the strategic design and management of complex data ecosystems across the enterprise. It validates the ability to lead large-scale transformations and design federated data architectures that support global business objectives.

Who should take it

This level is intended for Senior Engineers, Lead Architects, and Technical Directors who are responsible for the long-term technical strategy and organizational structure of data teams. Candidates should have extensive experience in both data engineering and cloud operations.

Skills you’ll gain

- Designing federated data governance models for complex organizations.

- Implementing Data Mesh and Data Fabric architectures.

- Strategic financial management of large-scale data platforms.

- Leading organizational change toward a mature DataOps culture.

Real-world projects you should be able to do

- Design a multi-region data architecture that complies with global sovereignty laws.

- Develop a corporate-wide DataOps strategy that integrates multiple business units.

- Conduct a financial audit of data infrastructure and implement cost-saving automations.

Preparation plan

- 7-14 Days: Study high-level architectural patterns and enterprise governance frameworks.

- 30 Days: Analyze case studies of successful enterprise-scale DataOps implementations.

- 60 Days: Develop a comprehensive architectural project that demonstrates mastery of all certification concepts.

Common mistakes

- Losing sight of the business value while focusing on architectural purity and complexity.

- Failing to account for the human and organizational barriers to DataOps adoption.

- Designing architectures that are too rigid and do not allow for rapid iteration.

Best next certification after this

- Same-track option: Advanced Data Strategy Expert

- Cross-track option: FinOps Certified Practitioner

- Leadership option: Chief Technology Officer Program

Choose Your Learning Path

DevOps Path

Professionals in this path focus on integrating data pipelines into existing software delivery cycles seamlessly. The goal is to treat data as just another component of the application, ensuring that database migrations and updates are as automated as code deployments. This path is ideal for engineers who want to break down the silos between the application and the database teams. It focuses heavily on CI/CD for data and automated environment provisioning.

DevSecOps Path

This path emphasizes the integration of security and compliance into every stage of the data lifecycle. Practitioners learn how to automate data masking, manage encryption keys at scale, and ensure that sensitive information is never exposed in lower environments. It is critical for organizations operating in highly regulated industries like finance or healthcare. This ensures that the data pipeline is not just fast, but also secure and auditable.
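
One common way to keep sensitive values out of lower environments is deterministic masking with a keyed hash, so that the same input always maps to the same token and joins still work. The sketch below uses Python's standard `hmac` and `hashlib` modules; the field names, the key, and the 16-character truncation are illustrative assumptions, and a real key would come from a secrets manager rather than source code.

```python
import hashlib
import hmac
from typing import Any, Dict, Set


def mask_value(value: str, key: bytes) -> str:
    """Replace a value with a keyed SHA-256 token (deterministic per key)."""
    digest = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return digest[:16]  # truncated for readability; an illustrative choice


def mask_record(
    record: Dict[str, Any], sensitive_fields: Set[str], key: bytes
) -> Dict[str, Any]:
    """Return a copy of the record with the sensitive fields masked."""
    return {
        name: mask_value(str(value), key) if name in sensitive_fields else value
        for name, value in record.items()
    }


# Hypothetical key and record, for demonstration only.
demo_key = b"demo-only-key"
customer = {"id": 42, "email": "jane@example.com", "country": "IN"}
masked = mask_record(customer, {"email"}, demo_key)
print(masked)
```

Because the token depends on the key, rotating the key changes every token, which is worth planning for if masked datasets need to stay joinable over time.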

SRE Path

The SRE path for data focuses on the reliability, scalability, and performance of data platforms at scale. Engineers in this track apply Site Reliability Engineering principles—such as Error Budgets and SLOs—to data pipelines. This ensures that data delivery is not just fast, but consistently dependable for business-critical decision-making. It covers incident management for data and the creation of highly available data architectures.
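
As a small worked example of an error budget applied to a data pipeline, suppose the SLO is that a given share of scheduled runs must succeed within a window. The numbers and the function name `error_budget` below are hypothetical; the arithmetic is simply the allowed failure share multiplied by the number of runs.

```python
from typing import Dict, Union


def error_budget(
    slo_target: float, total_runs: int, failed_runs: int
) -> Dict[str, Union[float, bool]]:
    """Compute how many failed runs the SLO allows and how many remain."""
    allowed_failures = (1.0 - slo_target) * total_runs
    remaining = allowed_failures - failed_runs
    return {
        "allowed_failures": allowed_failures,
        "remaining": remaining,
        "exhausted": remaining < 0,
    }


# Hypothetical scenario: an hourly pipeline over 30 days (720 runs)
# with a 99% success SLO and 5 failed runs so far.
budget = error_budget(slo_target=0.99, total_runs=720, failed_runs=5)
print(budget)
```

With these inputs the SLO allows roughly 7.2 failed runs, so about 2.2 remain. A team might freeze risky pipeline changes once the remaining budget approaches zero.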

AIOps Path

In the AIOps path, professionals learn how to use artificial intelligence and machine learning to improve IT operations. This involves using data-driven insights to predict outages, automate incident response, and optimize system performance. It focuses on applying DataOps principles to the massive streams of telemetry data generated by modern infrastructure. This path is essential for managing the complexity of modern, distributed cloud environments.

MLOps Path

The MLOps path is specifically tailored for the lifecycle management of machine learning models in production. It covers the transition of models from research to production, ensuring that data used for training is consistent with production data. This path addresses the unique challenges of model versioning, feature stores, and automated retraining. It is the bridge between data science and operational engineering excellence.

DataOps Path

This is the core path for those dedicated to the modernization of data engineering and delivery. It covers the full spectrum of data management, from source to consumption, focusing on automation and quality. Professionals in this track become the primary architects of the data-driven enterprise, enabling faster and more accurate insights. It is the most comprehensive path for building a career in modern data operations.

FinOps Path

The FinOps path addresses the financial management and optimization of data infrastructure in the cloud. As data volumes grow, costs can spiral out of control if not managed with a strategic, automated approach. This path teaches engineers how to design cost-effective data storage and processing strategies. It ensures the data platform remains sustainable and provides a clear return on investment for the organization.
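
To illustrate the kind of trade-off this path deals with, the sketch below compares the monthly storage cost of keeping everything in a hot tier versus moving most of the data to a cold tier. The per-GB prices are placeholder values chosen for easy arithmetic, not any provider's real pricing, and `monthly_storage_cost` is a hypothetical helper.

```python
def monthly_storage_cost(
    total_gb: float, hot_fraction: float, hot_price: float, cold_price: float
) -> float:
    """Monthly cost when hot_fraction of the data stays in the hot tier."""
    hot_gb = total_gb * hot_fraction
    cold_gb = total_gb - hot_gb
    return hot_gb * hot_price + cold_gb * cold_price


# Placeholder prices per GB-month (assumptions, not real provider rates).
HOT_PRICE = 0.02
COLD_PRICE = 0.005

all_hot = monthly_storage_cost(10_000, 1.0, HOT_PRICE, COLD_PRICE)
tiered = monthly_storage_cost(10_000, 0.2, HOT_PRICE, COLD_PRICE)
print(all_hot, tiered, all_hot - tiered)
```

Under these assumed prices, tiering 80% of a 10,000 GB dataset cuts the monthly bill from about 200 to about 80. A fuller model would also account for retrieval and processing costs, which this sketch ignores.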

Role → Recommended Certifications

| Role | Recommended Certifications |
| --- | --- |
| DevOps Engineer | DataOps Foundation, DataOps Specialist |
| SRE | DataOps Specialist, SRE Professional |
| Platform Engineer | DataOps Architect, Cloud Architect |
| Cloud Engineer | DataOps Foundation, FinOps Practitioner |
| Security Engineer | DataOps Security, DevSecOps Professional |
| Data Engineer | DataOps Specialist, DataOps Architect |
| FinOps Practitioner | DataOps FinOps, Cloud FinOps |
| Engineering Manager | DataOps Foundation, DataOps Architect |

Next Certifications to Take After CDOA – Certified DataOps Architect

Same Track Progression

Once you have achieved the Architect level, the next logical step is to dive deeper into specialized data domains. You might pursue advanced credentials in data science or specialized big data engineering to complement your operational skills. This ensures you remain a subject matter expert who can handle both the infrastructure and the data logic itself. Continuous learning in this track keeps you at the forefront of data technology.

Cross-Track Expansion

Broadening your skills into adjacent fields like cybersecurity or cloud architecture is highly recommended for architects. A DataOps Architect with a strong understanding of DevSecOps or FinOps is incredibly valuable to any global enterprise. This cross-training allows you to see the big picture of how data fits into the wider organizational technology stack. It makes you a more versatile leader who can speak multiple technical languages.

Leadership & Management Track

For those looking to move away from hands-on engineering, the transition into technical leadership is a natural progression. Moving toward a Director of Platform Engineering or a Head of Data Operations role involves shifting focus to team strategy. Your background in DataOps will provide the operational foundation needed to lead high-performing teams. This track focuses on budgeting, hiring, and aligning technical goals with business outcomes.

Training & Certification Support Providers for CDOA – Certified DataOps Architect

DevOpsSchool is a leading global institution that provides extensive training programs for modern engineering disciplines including DataOps. They offer structured courses that cover the full spectrum of the certification track, from foundation to advanced levels. Their curriculum is designed by industry experts and focuses on providing practical, hands-on experience through real-world projects. Students benefit from a strong community and dedicated mentorship that helps them navigate their career paths effectively. The school is known for its focus on production-ready skills that are immediately applicable in an enterprise setting.

Cotocus specializes in delivering high-end technical training and consulting services for enterprise teams looking to modernize. They focus on providing customized learning experiences that align with the specific needs and goals of an organization. Their instructors are seasoned professionals who bring a wealth of practical knowledge to the classroom. By choosing this provider, engineers gain a deep understanding of how to implement complex automation strategies in production environments. Their training is highly regarded for its depth and its focus on solving actual architectural challenges.

Scmgalaxy is a comprehensive resource hub and training provider for software configuration management and DevOps professionals worldwide. They offer a vast library of tutorials, webinars, and certification programs that help engineers stay updated with the latest industry trends. Their focus is on building a strong community of learners who can share best practices and solve complex technical challenges together. It is an excellent choice for those seeking continuous professional development and a wealth of documentation. Their approach ensures that students are well-supported throughout their entire learning journey.

BestDevOps provides targeted training programs that focus on the most in-demand skills in the current global job market. They emphasize a practical, lab-based approach to learning, ensuring that students can apply what they learn in the classroom to their day-to-day work. Their courses are designed to be accessible to professionals at all levels, from beginners to advanced architects. They are known for their high success rates in helping students achieve professional certifications and career advancements. The instructors provide a clear path to mastery through repetitive, hands-on practice.

Devsecopsschool.com focuses exclusively on the integration of security into the DevOps and DataOps lifecycle for technical teams. They provide specialized training that covers everything from secure coding practices to automated compliance monitoring and data masking. Their programs are essential for any professional looking to specialize in the security aspects of the data delivery pipelines. The curriculum is constantly updated to address the latest security threats and international standards. This provider helps engineers build a mindset where security is a primary consideration in every technical decision.

Sreschool.com is dedicated to the discipline of Site Reliability Engineering and its application to modern infrastructure. They offer in-depth courses that teach engineers how to build and maintain highly reliable systems that can scale. The training covers essential SRE concepts like error budgets, incident management, and automated system monitoring. This is an ideal provider for those looking to apply reliability principles to their data operations and large-scale platforms. Their focus on stability and uptime is critical for any enterprise-level DataOps implementation.

Aiopsschool.com provides cutting-edge training on the application of artificial intelligence to improve IT and data operations. Their programs help professionals understand how to leverage data and machine learning to automate complex operational tasks. As organizations generate more telemetry data than humans can process, the skills taught here are becoming increasingly critical for architects. It is the perfect choice for forward-thinking engineers who want to lead the next wave of automation. Their training bridges the gap between traditional operations and futuristic AI solutions.

Dataopsschool.com is the primary provider for certifications focused specifically on the operational side of data management and architecture. They offer a structured path from associate to architect levels, ensuring a comprehensive understanding of DataOps principles. Their training is recognized globally and is highly valued by enterprises looking to modernize their data platforms. The focus remains entirely on production-grade excellence and scalable data delivery. This provider is the standard-bearer for the DataOps movement and the CDOA certification itself.

Finopsschool.com addresses the critical need for financial management and cost transparency in the cloud era. They provide training that helps engineers and managers understand the cost implications of their technical and architectural decisions. Their programs cover cloud cost optimization, resource tagging, and financial reporting for technical teams. This provider is essential for any professional looking to master the economic side of modern cloud and data infrastructure. They help organizations ensure that their technical growth is financially sustainable and efficient.

Frequently Asked Questions (General)

- How difficult is it to achieve the CDOA certification?

The difficulty level varies depending on the specific track you choose. The Foundation level is accessible to most with a basic technical background, while the Architect level requires significant experience and a deep understanding of complex system design and strategy.

- How much time should I dedicate to preparing for the exam?

For the Foundation level, 30 days of consistent study is usually sufficient for most candidates. For the Professional and Architect levels, we recommend at least 60 to 90 days of preparation, including hands-on lab work and case study analysis.

- Are there any prerequisites for taking the Foundation exam?

There are no formal prerequisites for the Associate level, though a basic understanding of data engineering and cloud concepts is highly recommended to ensure you can follow the course material effectively.

- What is the return on investment for this certification?

Professionals who hold these certifications often see a significant increase in their marketability and earning potential. Enterprises value the validated ability to manage complex data operations and reduce operational costs through automation.

- Can I take the exams online?

Yes, most certification programs offer an online proctored exam option, allowing you to complete the assessment from your home or office while maintaining the high integrity of the certification process.

- Do I need to renew the certification periodically?

Most professional certifications in this field require renewal every two to three years to ensure that your skills remain current with the latest industry developments and changing tool ecosystems.

- Is there a specific order I should follow for the certifications?

We strongly recommend starting with the Foundation level and progressing through the Specialist and Architect levels in order. This ensures a solid understanding of the basics before moving to high-level architectural strategy.

- How does DataOps differ from traditional Data Engineering?

While Data Engineering focuses on building pipelines, DataOps focuses on the automation, testing, and operational reliability of those pipelines throughout their entire lifecycle, from development to production.

- Are these certifications recognized in the Indian market?

Yes, these credentials are highly recognized by both Indian tech firms and global multinational corporations operating in India, as the demand for specialized DataOps talent is universal across the technology sector.

- What tools will I learn during the training?

The training focuses on principles but uses common industry tools like Airflow, Jenkins, Terraform, and various cloud-native data services to demonstrate how these principles are applied in real-world scenarios.

- Is coding experience required for these certifications?

While deep software engineering skills aren’t always required for every level, a basic understanding of scripting and SQL is essential for the practical portions of the training and exams.

- Can managers benefit from these technical certifications?

Absolutely. Technical managers and leaders gain a much better understanding of how to structure their teams and evaluate the technical decisions made by their architects through this structured learning.

FAQs on CDOA – Certified DataOps Architect

- What is the primary focus of the CDOA – Certified DataOps Architect program?

The CDOA focuses specifically on the operational lifecycle and architectural design of data systems. It teaches how to build resilient, automated environments that can handle enterprise-scale data flows without constant manual intervention or breakage.

- Does this certification cover multi-cloud and hybrid data architectures?

Yes, the architect level focuses heavily on designing systems that work across various environments. This includes managing data movement and consistency between on-premises servers and multiple cloud providers like AWS, Azure, and Google Cloud.

- How does the program address data governance and compliance?

Governance is a core pillar of the curriculum. It teaches you how to automate compliance checks, implement data lineage tracking, and ensure that security policies are enforced programmatically rather than through manual, error-prone audits.

- Is there a focus on cost optimization in the track?

A significant portion of the Advanced track is dedicated to the financial side of operations. You will learn how to design architectures that are high-performing yet cost-effective, ensuring maximum value from infrastructure investments.

- How does the certification prepare me for roles in AI?

The CDOA provides the operational skills needed to support MLOps. By mastering data delivery and quality, you ensure that the data feeding into AI models is reliable and timely, which is critical for success.

- What kind of real-world scenarios are covered in assessments?

Assessments involve troubleshooting failed pipelines, designing schema evolution strategies, and creating automated testing frameworks for diverse datasets. These scenarios are based on real problems faced by data teams in production environments globally.

- Can I specialize in a specific industry within the track?

While the principles are universal, the training provides frameworks adaptable to any sector. The security and governance modules are particularly relevant for those working in regulated industries like finance where data privacy is paramount.

- How does this certification help in building a Data Mesh?

The CDOA provides the technical and organizational blueprints needed to implement a Data Mesh. It covers how to create decentralized data domains with centralized infrastructure and governance, which is essential for modern, scalable strategies.

Final Thoughts: Is CDOA – Certified DataOps Architect Worth It?

From a mentor’s perspective, the value of a certification isn’t found in the title itself, but in the discipline and structured thinking it forces you to adopt. The CDOA program is not about learning a specific tool; it is about learning a better way to work. In an industry where data volumes are exploding, the old manual ways of managing databases and pipelines are no longer sustainable.

If you are looking to move into a senior leadership or architectural role, this path provides the necessary vocabulary and framework to lead major technical transformations. It moves you away from being a firefighter who constantly fixes broken pipelines to being an architect who builds systems that don’t break. For any serious engineer or manager in the cloud and data space, this is a highly practical and worthwhile investment in your professional future.